Amazon Web Services (AWS) has announced the general availability of Amazon Timestream, a new time series database for IoT and operational applications. AWS says the service can scale to process trillions of time series events per day, up to 1,000 times faster than relational databases and at as little as one-tenth the cost.

The thinking behind Timestream is that it saves customers effort and expense by keeping recent data in memory and moving historical data to a cost-optimized storage tier based on user-defined policies. Its query processing lets customers access and combine recent and historical data across tiers with a single query, without specifying in the query whether the data resides in the in-memory tier or the cost-optimized tier.

The analytics features provide time series-specific functionality to help customers identify trends and patterns in data in near real time. Because the database is serverless, it automatically scales up or down to adjust capacity based on load, without customers needing to manage the underlying infrastructure.

Becoming cost effective

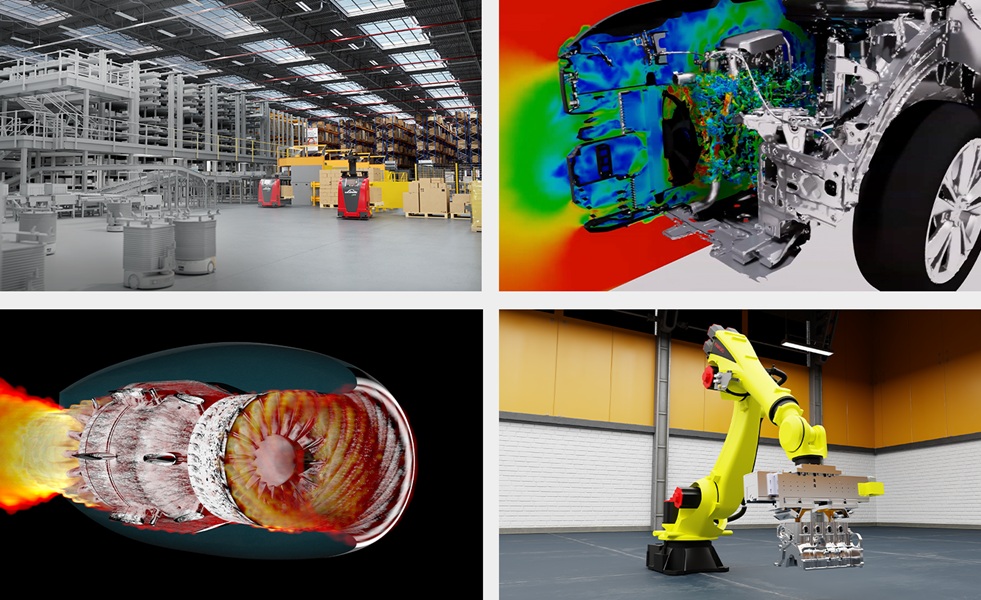

Today’s customers want to build IoT, edge, and operational applications that collect, synthesise, and derive insights from enormous amounts of data that change over time (known as time series data). For example, manufacturers might want to track IoT sensor data that measure changes in equipment across a facility, online marketers might want to analyse clickstream data that capture how a user navigates a website over time, and data center operators might want to view data that measure changes in infrastructure performance metrics.

This type of time series data can be generated from multiple sources in extremely high volumes, needs to be cost-effectively collected in near real time, and requires efficient storage that helps customers organize and analyse the data. To do this today, customers can either use existing relational databases or self-managed time series databases.

Neither of these options is attractive. Relational databases have rigid schemas that need to be predefined and are inflexible if new attributes of an application need to be tracked. For example, when new devices come online and start emitting time series data, rigid schemas mean that customers either have to discard the new data or redesign their tables to support the new devices, which can be costly and time-consuming.

Lack of functions

In addition to rigid schemas, relational databases require multiple tables and indexes that must be updated as new data arrives, which leads to complex and inefficient queries as the data grows over time. Relational databases also lack the time series analytical functions, such as smoothing, approximation, and interpolation, that help customers identify trends and patterns in near real time.

Alternatively, time series database solutions that customers build and manage themselves have limited data processing and storage capacity, making them difficult to scale. Many of the existing time series database solutions fail to support data retention policies, creating storage complexity as data grows over time. To access the data, customers must build custom query engines and tools, which are difficult to configure and maintain, and can require complicated, multi-year engineering initiatives.

Furthermore, these solutions do not integrate with the data collection, visualisation, and machine learning tools customers are already using today. The result is that many customers just do not bother saving or analysing time series data, missing out on the valuable insights it can provide.

Addressing these challenges

Amazon Timestream addresses these challenges by giving customers a purpose-built, serverless time series database for collecting, storing, and processing time series data. The database automatically detects the attributes of the data, so customers no longer need to predefine a schema. It simplifies the complex process of data lifecycle management with automated storage tiering that stores recent data in memory and automatically moves historical data to a cost-optimized storage tier based on predefined user policies.
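To make the tiering idea concrete, here is a minimal Python sketch of a retention policy that moves records out of an in-memory tier once they pass a user-defined age. It is an illustration only, not Timestream's implementation or API: the `TieredStore` class, its fields, and the six-hour window are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class TieredStore:
    """Toy two-tier store (hypothetical, for illustration only)."""
    memory_retention: timedelta                      # user-defined policy
    memory: list = field(default_factory=list)       # recent records
    historical: list = field(default_factory=list)   # cost-optimized tier

    def write(self, timestamp, value):
        # New records always land in the in-memory tier.
        self.memory.append((timestamp, value))

    def apply_policy(self, now):
        """Move records older than the retention window to the historical tier.

        Returns the number of records moved.
        """
        cutoff = now - self.memory_retention
        expired = [r for r in self.memory if r[0] < cutoff]
        self.memory = [r for r in self.memory if r[0] >= cutoff]
        self.historical.extend(expired)
        return len(expired)


now = datetime(2020, 10, 1, 12, 0)
store = TieredStore(memory_retention=timedelta(hours=6))
store.write(now - timedelta(hours=8), 20.5)  # older than the window
store.write(now - timedelta(hours=1), 21.0)  # still recent
moved = store.apply_policy(now)
```

In this sketch the policy is a single age threshold; a managed service would apply such a policy automatically rather than through an explicit call.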

It also uses a purpose-built adaptive query engine to transparently access and combine recent and historical data across tiers with a single SQL statement, without having to specify which storage tier houses the data. This enables customers to query all of their data using a single query without requiring them to write complicated application logic that looks up where their data is stored, queries each tier independently, and then combines the results into a complete view.
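The application logic that customers would otherwise write themselves can be sketched in a few lines of Python. The function below is a hypothetical illustration, not Timestream's query engine: it takes two lists of `(timestamp, value)` rows standing in for the two tiers, filters each by a time range, and combines the results into one ordered view.

```python
def query_range(memory_tier, historical_tier, start, end):
    """Return rows with start <= timestamp < end from both tiers, ordered by time.

    Both tiers are plain lists of (timestamp, value) tuples in this sketch.
    """
    rows = [
        row
        for tier in (historical_tier, memory_tier)
        for row in tier
        if start <= row[0] < end
    ]
    return sorted(rows, key=lambda row: row[0])


# The caller never says which tier holds which rows.
historical = [(1, "a"), (2, "b")]
memory = [(3, "c"), (4, "d")]
result = query_range(memory, historical, 2, 4)
```

The caller supplies only a time range; where each row lives is resolved inside the function, which is the behavior the single-query approach is meant to provide.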

In addition, it provides built-in time series analytics, with functions for smoothing, approximation, and interpolation. Customers therefore don’t have to extract raw data from their databases and analyse it with external tools and libraries, or write complex stored procedures that not all databases support. The serverless architecture is built with fully decoupled data ingestion and query processing systems, giving customers virtually infinite scale and the ability to grow storage and query processing independently and automatically, without having to manage the underlying infrastructure.
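For readers unfamiliar with these operations, the following Python sketch shows what interpolation and smoothing mean in their simplest forms. The function names are hypothetical, and the techniques shown (linear interpolation and a trailing moving average) are chosen for illustration; the article does not say which algorithms Timestream's built-in functions use.

```python
def interpolate_linear(points, t):
    """Estimate the value at time t from time-sorted (time, value) points."""
    for (t0, v0), (t1, v1) in zip(points, points[1:]):
        if t0 <= t <= t1:
            if t1 == t0:
                return v0
            return v0 + (v1 - v0) * (t - t0) / (t1 - t0)
    raise ValueError("t is outside the range of the known points")


def moving_average(values, window):
    """Smooth a series with a trailing moving average of the given window size."""
    if window < 1:
        raise ValueError("window must be at least 1")
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


# Fill a gap between two sensor readings, then smooth a short series.
midpoint = interpolate_linear([(0, 10.0), (10, 20.0)], 5)
smooth = moving_average([1, 2, 3, 4], 2)
```

Running such operations inside the database avoids exporting raw readings to an external tool first, which is the benefit the built-in functions are meant to deliver.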

“What we hear from customers is that they have a lot of insightful data buried in their industrial equipment, website clickstream logs, data center infrastructure, and many other places, but managing time series data at scale is too complex, expensive, and slow,” Shawn Bice, VP, databases, AWS, said. “Solving this problem required us to build something entirely new. Amazon Timestream provides a serverless database service that is purpose-built to manage the scale and complexity of time series data in the cloud, so customers can store more data more easily and cost effectively, giving them the ability to derive additional insights and drive better business decisions from their IoT and operational monitoring applications.”