Industrial transformation is no longer constrained by physical iteration but by the ability to model, simulate and validate complexity before it exists. The convergence of AI, simulation and real-time data is redefining how factories are designed, operated and continuously optimised.

The language of digital transformation in manufacturing has long been dominated by incremental gains: better visibility, improved connectivity and marginal efficiency improvements. What is now emerging is not an extension of that narrative but a break from it. The centre of gravity is shifting away from the physical factory as the starting point for decision-making and towards a digital environment where those decisions are made, tested and proven before anything is built.

This is not a conceptual shift; it is an operational one. The growing complexity of modern manufacturing, from product variability to supply chain volatility and rising cost pressures, has exposed the limitations of fragmented systems and disconnected workflows. Data exists in abundance, but it is rarely aligned, contextualised or actionable across the full lifecycle of a product or facility. The result is latency in decision-making, duplication of effort and risk that only becomes visible when it is most expensive to resolve.

The evolution of the digital twin is central to addressing this problem, but not in the way it has traditionally been understood. The digital twin is no longer merely a representation of an asset or a visualisation layer for engineering teams. It is becoming a dynamic operational system, continuously fed by real-world data and capable of simulating outcomes with increasing fidelity. What matters is not the existence of the twin, but the environment in which it operates and the decisions it enables.

The birth of the industrial metaverse

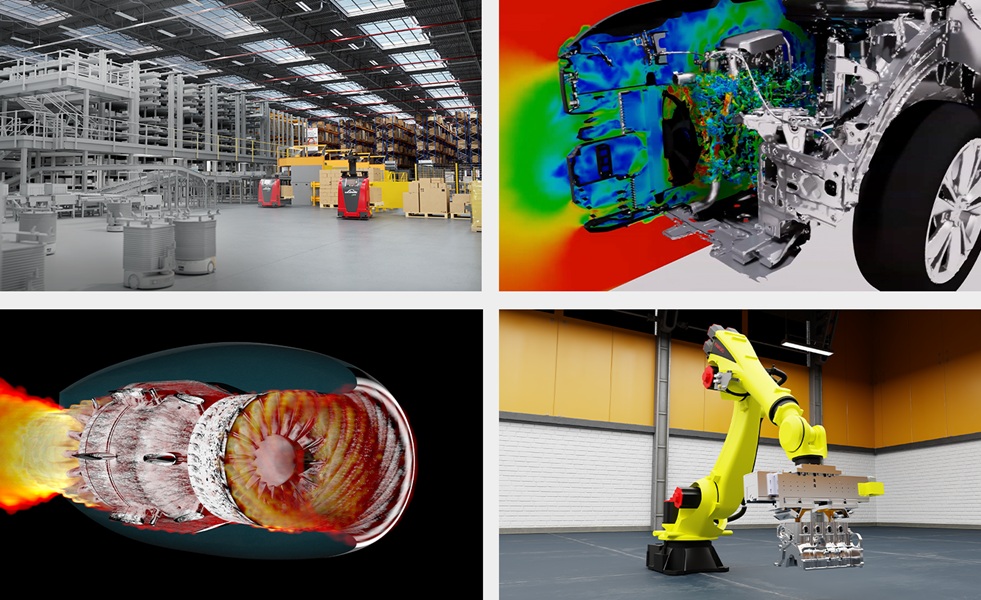

Siemens has framed this shift through its Digital Twin Composer, positioning it as a foundation for what it describes as the industrial metaverse. The terminology is less important than the intent. The objective is to bring together design, engineering and operations into a single, persistent environment where the entire lifecycle of a product, process or facility can be modelled, tested and optimised in context.

At its core, Digital Twin Composer addresses a structural issue that has persisted for decades. Engineering disciplines operate in silos, supported by different tools, data models and processes. Design teams create, simulation teams validate and operations teams execute, but the connections between them are often indirect and delayed. Changes made in one domain are not immediately reflected in others, and the feedback loop between design and reality is slower than it needs to be.

By combining 2D and 3D digital twin data with real-time operational inputs, Digital Twin Composer collapses these boundaries into a single living model. The implication is not simply improved collaboration, but a reduction in the time between decision and validation. Iteration becomes faster, and the cost of error decreases because issues can be identified and addressed before they manifest physically.

This is where simulation begins to move from a supporting capability to a central operating principle. Traditionally, simulation has been constrained by computational limits and disconnected data, used selectively rather than continuously. What is now emerging is an environment where simulation is persistent, embedded within the workflow and directly linked to real-world conditions.

Through integration with platforms such as NVIDIA Omniverse, Digital Twin Composer enables physically accurate, photorealistic environments where entire factories and supply chains can be recreated with high fidelity. This allows organisations to explore scenarios, test configurations and validate decisions in a virtual space before committing resources in the physical world.

Reducing the cost of failure

The shift is not just technical; it is economic. When decisions can be validated in simulation, the cost of failure is significantly reduced. Capital expenditure can be optimised because investments are tested and refined before implementation. Time to market accelerates because design cycles are compressed and bottlenecks are identified earlier. The factory, in effect, is proven digitally before it exists physically.

The collaboration between PepsiCo and Siemens provides a clear example of how this translates into operational reality. By creating high-fidelity 3D digital twins of manufacturing and warehouse facilities, PepsiCo has been able to simulate plant operations and end-to-end supply chain dynamics with a level of detail that fundamentally changes how decisions are made.

Within weeks, teams were able to optimise configurations and validate new approaches, establishing a performance baseline that reflects both design intent and operational reality. The reported outcomes are not marginal. Throughput increased by 20 percent in initial deployments, while design validation was close to complete before any physical implementation.

More significant is the ability to identify up to 90 percent of potential issues before changes are made in the physical environment. This represents a shift in how risk is managed. Problems that would traditionally emerge during commissioning or operation can now be addressed in advance, reducing disruption, cost and time lost to rework. At the same time, reductions in capital expenditure of between 10 and 15 percent point to a different approach to investment, one that is informed by simulation rather than assumption.

From visibility to intervention

Many digital initiatives have focused on visibility, providing organisations with dashboards and insights into their operations. While valuable, visibility alone does not drive change. The ability to act on that insight, quickly and with confidence, is what defines operational advantage.

Digital Twin Composer extends beyond visibility into intervention. By integrating real-time data from manufacturing execution systems, quality platforms, programmable logic controllers and industrial IoT environments, it creates a dynamic system where decisions can be tested, validated and executed within the same context. The digital twin becomes not just a mirror of reality, but a mechanism for shaping it.

This becomes particularly powerful when combined with AI. AI agents can operate within the digital environment, simulating scenarios, identifying inefficiencies and recommending actions. Those actions can be validated before being applied, creating a feedback loop where learning and optimisation occur continuously. The factory evolves not through periodic upgrades, but through constant refinement.
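To make the "validate before applying" loop concrete, here is a minimal sketch under deliberately toy assumptions. The "simulation" is just the bottleneck rate of a serial production line, and the "agent" is a hypothetical function that proposes adding one machine to each station in turn. A proposal is applied only if the simulated throughput gain clears a threshold. None of the names below (`Station`, `simulate_line`, `propose`, `refine`) come from Digital Twin Composer or NVIDIA Omniverse; they are illustrative only.

```python
"""Toy validate-before-apply loop (hypothetical model, not a Siemens or NVIDIA API)."""
from dataclasses import dataclass


@dataclass(frozen=True)
class Station:
    name: str
    machines: int
    cycle_time_s: float  # seconds per unit, per machine

    def rate_per_hour(self) -> float:
        return self.machines * 3600.0 / self.cycle_time_s


def simulate_line(stations: list[Station]) -> float:
    """Toy 'simulation': a serial line runs at the pace of its slowest station."""
    return min(s.rate_per_hour() for s in stations)


def propose(stations: list[Station]) -> list[list[Station]]:
    """Hypothetical agent: propose adding one machine to each station in turn."""
    proposals = []
    for i, s in enumerate(stations):
        candidate = list(stations)
        candidate[i] = Station(s.name, s.machines + 1, s.cycle_time_s)
        proposals.append(candidate)
    return proposals


def refine(stations: list[Station], budget_machines: int, min_gain: float = 0.05):
    """Apply only changes the simulation shows improve throughput by >= min_gain."""
    applied = []
    for _ in range(budget_machines):
        baseline = simulate_line(stations)
        best, best_gain = None, 0.0
        for candidate in propose(stations):
            gain = simulate_line(candidate) / baseline - 1.0
            if gain >= min_gain and (best is None or gain > best_gain):
                best, best_gain = candidate, gain
        if best is None:
            break  # no proposal passed validation; leave the line unchanged
        changed = next(b.name for a, b in zip(stations, best) if a != b)
        applied.append(changed)
        stations = best
    return stations, applied


# Hypothetical three-station line: press 120/h, weld 80/h, paint 120/h.
line = [Station("press", 1, 30.0), Station("weld", 1, 45.0), Station("paint", 2, 60.0)]
refined, applied = refine(line, budget_machines=3)
print(applied, simulate_line(line), simulate_line(refined))  # → ['weld'] 80.0 120.0
```

In this example the loop identifies the weld station as the bottleneck and raises throughput from 80 to 120 units per hour. After that, press and paint share the bottleneck, so no single added machine passes validation and the remaining budget is left unspent. That is the point of testing in simulation first: the change that would not have helped is rejected before it reaches the physical line.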

This is the point at which industrial intelligence begins to take on a more concrete meaning. It is not simply about analysing historical data or automating individual processes. It is about creating a system where data, simulation and AI converge to inform decisions in real time, across the entire lifecycle of a product or facility.

Where complexity is resolved

Modern manufacturing is defined by complexity, not only in the scale of operations but in the interdependencies between systems, processes and external factors. Supply chains are more dynamic, product lifecycles are shorter and sustainability requirements are more demanding. Managing this complexity through traditional means is increasingly untenable.

The value of Digital Twin Composer lies in its ability to provide a unified environment where this complexity can be modelled and understood. By bringing together physical AI, simulation and real-time data, it enables organisations to move from reactive problem-solving to proactive optimisation. Decisions are no longer based solely on what has happened, but on what is likely to happen under different conditions.

This has direct implications for sustainability and efficiency. Energy consumption, material usage and process performance can be evaluated and optimised within the digital environment before changes are implemented. The ability to test scenarios at scale allows organisations to balance performance, cost and environmental impact in a more systematic way.
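One common, systematic way to compare scenarios across performance, cost and environmental impact is a Pareto filter. A scenario is kept only if no other scenario is at least as good on every objective and strictly better on at least one. The sketch below assumes three objectives (throughput, cost, energy). The `Scenario` fields, scenario names and all numbers are hypothetical illustrations, not outputs of any Siemens tool, and this is not a claim about how Digital Twin Composer ranks scenarios internally.

```python
"""Pareto filtering of simulated scenarios (hypothetical data, illustrative only)."""
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    throughput: float  # units/hour, higher is better
    cost: float        # relative annualised cost, lower is better
    energy_mwh: float  # annual energy use, lower is better


def dominates(a: Scenario, b: Scenario) -> bool:
    """True if a is no worse than b on every objective and strictly better on one."""
    no_worse = (a.throughput >= b.throughput
                and a.cost <= b.cost
                and a.energy_mwh <= b.energy_mwh)
    strictly_better = (a.throughput > b.throughput
                       or a.cost < b.cost
                       or a.energy_mwh < b.energy_mwh)
    return no_worse and strictly_better


def pareto_front(scenarios: list[Scenario]) -> list[Scenario]:
    """Keep scenarios that no other scenario dominates (a scenario never dominates itself)."""
    return [s for s in scenarios if not any(dominates(o, s) for o in scenarios)]


# Hypothetical scenario results, as a digital twin simulation might report them.
scenarios = [
    Scenario("baseline", 100.0, 1.00, 500.0),
    Scenario("extra_shift", 130.0, 1.20, 620.0),
    Scenario("new_layout", 125.0, 1.05, 480.0),
    Scenario("rush_retrofit", 120.0, 1.30, 650.0),
]
print([s.name for s in pareto_front(scenarios)])
# → ['baseline', 'extra_shift', 'new_layout']
```

Here "rush_retrofit" drops out because "new_layout" beats it on all three axes. The three survivors each represent a different trade-off, and choosing among them remains a business judgement; the filter only removes options that are strictly worse.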

What emerges is a different starting point for manufacturing. The process no longer begins with physical construction, followed by testing and adjustment. It begins in the digital domain, where design, validation and optimisation occur in parallel. The physical world becomes the execution layer, informed by a level of insight that was previously unattainable.

The introduction of Digital Twin Composer reflects this broader transition. It is not simply a new tool, but part of a shift towards systems of decision rather than systems of record. The organisations that succeed in this environment will be those that can integrate these capabilities into their operating model, breaking down silos and aligning teams around a shared, continuously evolving representation of their operations.

The factory, in this context, is no longer a fixed asset. It is a dynamic system, shaped as much by its digital counterpart as by its physical form, and continuously refined through the interaction between the two.