Digital twin technology is fast becoming foundational to manufacturing operations. Still, without connectivity, immersive visualisation, and strategic foresight, even the best tools risk becoming isolated replicas rather than real-time, real-world assets.

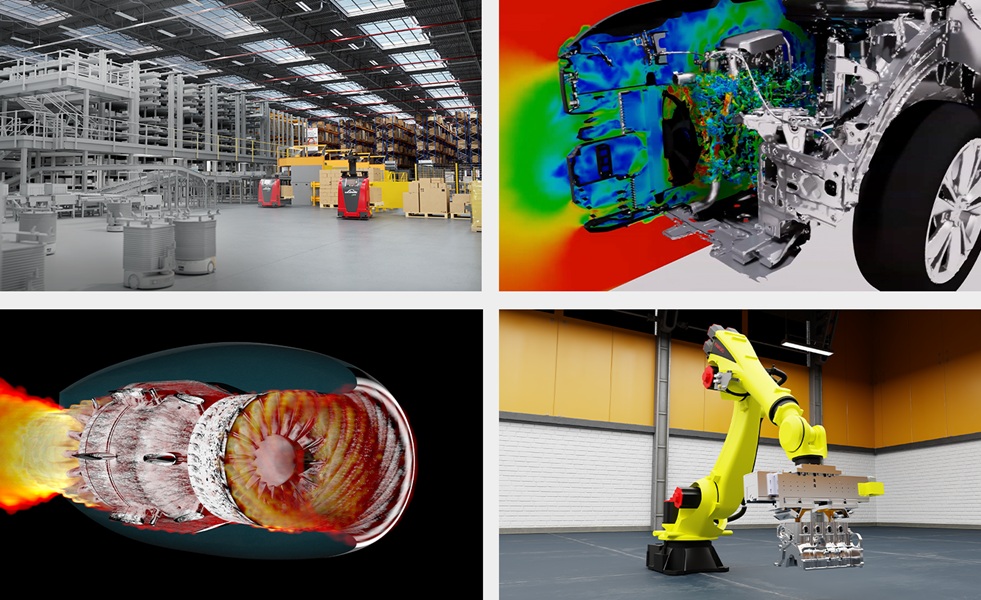

Digital twins have long been positioned as the key to unlocking smarter, more agile industrial operations. Their origins lie in engineering design and complex system simulation, where a digital replica of a physical object or process could help test theories, avoid failures, and iterate on design decisions in virtual space. In early deployments, the emphasis was on mirroring: the twin was a static or semi-static model, useful for analysing behaviour but limited in dynamic responsiveness.

Yet manufacturing is no longer operating in a static world. Systems evolve in real time. Data flows are continuous. Equipment and workers must adapt on the fly. In this environment, manufacturers require digital twins that do more than simulate. They must reflect the live state of operations, ingest real-time inputs from connected devices, and support decision-making across supply chains, product lines, and customer touchpoints. For that to happen, digital twins must evolve beyond simulation into fully integrated digital infrastructure.

This shift is not only technical but strategic. A digital twin that fails to interact with its environment, respond to inputs, or support organisational goals becomes a bottleneck rather than a breakthrough. The real opportunity lies in connecting operational, behavioural, and contextual data into a single, navigable interface that informs and accelerates action. That requires rethinking how twins are built, deployed, and scaled.

Connectivity is not a given

Despite the assumption that digital twins are, by definition, ‘connected,’ the reality is often quite different. Many deployments operate in partial or fragmented environments. Machines are connected but not monitored. Sensors stream data that is not integrated. And perhaps most critically, the state of device connectivity itself is often unknown. Luc Vidal, Head of M2M/IoT Business at BICS, warns that this blind spot can severely undermine the effectiveness of digital twins.

“Digital twins create virtual replicas of systems and objects, but they do not give manufacturers the complete picture of potential faults and inefficiencies,” he explains. “They are missing the connectivity component and leaving a lot of intelligence on the table.”

This gap becomes particularly acute at scale. In a factory with hundreds or thousands of connected Internet of Things (IoT) devices, manually monitoring each device’s connectivity status is no longer feasible. The difference between no connectivity and poor connectivity is critical yet often invisible. A machine that drops its signal intermittently may appear functional but fail to deliver key performance data at crucial moments.

Vidal points to the concept of the ‘connectivity twin’ as a solution. By virtually cloning the SIM or eSIM of each device, manufacturers can gain real-time visibility into connectivity performance across the entire IoT estate. This additional layer of data enables faster troubleshooting, better resource allocation, and, ultimately, higher reliability across operations. “Time is money, after all, and no manufacturer wants poor connectivity slowing down their time to market,” he adds.

This becomes especially important in applications such as fleet management, remote diagnostics, and battery-powered IoT systems. Without accurate, up-to-the-minute data on network availability, signal strength, or roaming behaviour, predictive analytics and condition-based maintenance quickly lose their edge. Integrating connectivity twins into the broader digital twin architecture ensures that the system remains grounded in operational reality.
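To make the distinction between no connectivity and poor connectivity concrete, the Python sketch below classifies a device from the arrival times of its recent heartbeats. It is only an illustration under stated assumptions: the `ConnectivityTwin` record, the `classify_connectivity` function, the 60-second reporting interval, and the thresholds are all hypothetical and do not describe any vendor's product or API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ConnectivityTwin:
    """Hypothetical per-device record mirroring the state of its SIM/eSIM link."""
    device_id: str
    heartbeats: List[float]  # heartbeat arrival times in seconds, ascending


def classify_connectivity(twin: ConnectivityTwin, now: float,
                          expected_interval: float = 60.0,
                          window: float = 600.0) -> str:
    """Return 'offline', 'intermittent', or 'healthy'.

    All thresholds are illustrative assumptions, not recommended values.
    """
    # Keep only heartbeats that arrived inside the observation window.
    recent = [t for t in twin.heartbeats if now - window <= t <= now]

    # No recent heartbeat, or a long silence since the last one: no connectivity.
    if not recent or now - recent[-1] > 3 * expected_interval:
        return "offline"

    # Still reporting, but with too many gaps in the window: poor connectivity.
    expected_count = window / expected_interval
    if len(recent) < 0.8 * expected_count:
        return "intermittent"

    return "healthy"


if __name__ == "__main__":
    # A device that reports, but only sporadically, over the last ten minutes.
    press = ConnectivityTwin("press-01", [0.0, 60.0, 300.0, 540.0])
    print(press.device_id, classify_connectivity(press, now=600.0))
```

A device in the "intermittent" state is the case the article warns about: it looks alive to a simple up/down check, yet it is missing a large share of the reports that analytics depend on.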

Visual complexity must meet human clarity

Even the most connected and data-rich digital twin can fall short if it fails to communicate meaning effectively to its users. As digital twins grow in complexity, modelling not only physical systems but also behavioural logic, customer interactions, and lifecycle metrics, the demands on their visual interfaces rise sharply.

Patrick Thurman, Commercial Product Lead at Qt Group, believes that visual fidelity and responsiveness are no longer optional. “Digital twins have become essential in industrial automation,” he notes. “But they are also getting more complex, so they are increasingly requiring more and more sophisticated visualisation capabilities to represent all that data in a digestible way.”

This is not simply a matter of aesthetics. The ability to quickly parse trends, identify outliers, and explore ‘what if’ scenarios depends on how well the interface surfaces meaning. High-performance 2D graphics, interactive dashboards, and immersive 3D environments are tools for operational insight. In manufacturing contexts, these capabilities allow engineers to model production lines, simulate process changes, and monitor equipment behaviour from a centralised control point.

Beyond analytics, visualisation plays a critical role in training and onboarding. A responsive, real-time digital twin interface enables maintenance teams to visualise failure modes, production managers to rehearse line changes, and frontline operators to understand the impact of their inputs. “You are helping people get up to speed faster and more quickly understand what is happening to the device or on the production line,” says Thurman. “That is a significant improvement to the manufacturing process.”

This responsiveness must be maintained across a range of platforms. Whether deployed to embedded devices, control room systems, or cloud-based dashboards, digital twin applications must deliver consistent performance, even under demanding real-time data loads. Multi-threaded execution, memory efficiency, and cross-platform portability are now essential attributes of the digital twin stack.

Faster cycles demand smarter infrastructure

In industries such as automotive manufacturing, digital twins are being pushed to new limits. Once a domain defined by decades-long product cycles and multi-year development roadmaps, the sector now faces pressure from new entrants, electrification mandates, and the emergence of software-defined vehicles.

Elliot Clarke, UKI Regional Director at PTC, highlights just how much the ground has shifted. “Standard vehicle development time used to be 60 to 72 months,” he explains. “Today, some Chinese carmakers claim they can reduce it to just 28 months.” The average in advanced markets has also dropped significantly, hovering around 32 to 40 months.

Digital twins are part of the reason why. By enabling rapid iteration in early design, validating new architectures virtually, and reducing reliance on physical prototypes, manufacturers can move from concept to launch with unprecedented speed. But Clarke argues that this acceleration must not come at the cost of strategic depth.

“If a vehicle is not connected and its data cannot be analysed in real time, then providing first-class customer-centric services and optimising the development of future car generations becomes challenging,” he explains. “The most forward-looking use of digital twins links engineering and aftersales, simulation and user behaviour, performance and product evolution.”

This feedback loop is where digital twins can create a lasting competitive advantage. Not only do they enable faster product development, but they also support long-term differentiation through customer-centric design, service personalisation, and continuous improvement. For Clarke, this is particularly critical in high-cost manufacturing markets such as the UK, where agility and value-added innovation are essential to staying competitive.

Digital twins must operate in unison

If there is a unifying theme across these perspectives, it is the importance of cohesion. Connectivity data must flow into operational dashboards. Graphical interfaces must accurately reflect the real-time states of the system. Simulation models must adapt to user feedback and live telemetry. All of these elements must work together within a unified architecture, one that spans organisational silos, software environments, and device ecosystems.

“Manufacturers must resist the temptation to build point solutions that look impressive but fail to scale,” warns Thurman. Applications must be portable across hardware platforms, integrated with standard protocols, and future-proofed against evolving use cases.

Vidal echoes this with a focus on data coherence. Enterprises often deploy separate device and connectivity management platforms, resulting in fragmented visibility. Merging these into a single pane of glass makes operational intelligence more accessible and actionable. Meanwhile, Clarke points to the importance of aligning digital twin initiatives with broader organisational goals. If deployment is driven purely by engineering or IT, without engagement from product, operations, and customer teams, the full value remains unrealised.
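The single pane of glass that Vidal describes can be pictured as a join between two exports keyed on a shared device identifier. The Python sketch below is a minimal illustration only: the `merge_views` function, the field names, and the sample values are hypothetical, and real device and connectivity platforms will expose their data differently.

```python
from typing import Any, Dict, Optional


def merge_views(
    device_records: Dict[str, Dict[str, Any]],
    connectivity_records: Dict[str, Dict[str, Any]],
) -> Dict[str, Dict[str, Optional[Dict[str, Any]]]]:
    """Combine two hypothetical platform exports into one view per device.

    A device missing from either source keeps a None entry, so gaps in
    visibility are shown rather than silently dropped.
    """
    merged = {}
    for device_id in sorted(set(device_records) | set(connectivity_records)):
        merged[device_id] = {
            "device": device_records.get(device_id),
            "connectivity": connectivity_records.get(device_id),
        }
    return merged


if __name__ == "__main__":
    # Invented sample data, for illustration only.
    devices = {"press-01": {"temperature_c": 71.5}}
    links = {
        "press-01": {"signal_dbm": -97, "roaming": False},
        "agv-07": {"signal_dbm": -80, "roaming": True},
    }
    for device_id, view in merge_views(devices, links).items():
        print(device_id, view)
```

Keeping the `None` entries is a deliberate choice in this sketch: a device that appears in the connectivity data but not in the device platform is exactly the kind of fragmented visibility the merged view is meant to expose.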

Rethinking the role of digital twins

The next generation of digital twins will not be measured by how accurately they reflect physical systems but by how effectively they support operational agility, strategic decision-making, and customer engagement. That means asking different questions during implementation. What insights will this twin generate? How will it integrate with existing systems? Who will use it, and how will they use it?

It also means shifting the conversation from technology to capability. Connectivity twins, immersive 3D interfaces, and integrated architectures are not features; they are essential components. They enable new ways of working. They support the move towards predictive maintenance, responsive manufacturing, and outcome-based business models. But only if deployed with clarity, ambition, and cross-functional commitment.

Digital twins will not solve operational challenges on their own. However, when implemented with strategic intent, they can become the nervous system of the digital enterprise, connecting perception with action and simulation with value.