CTS spoke to Greg Bentley, CEO of Bentley Systems, about the evolution of the digital twin and its implications for managing assets in manufacturing.

How has your view of digital twins changed over the years?

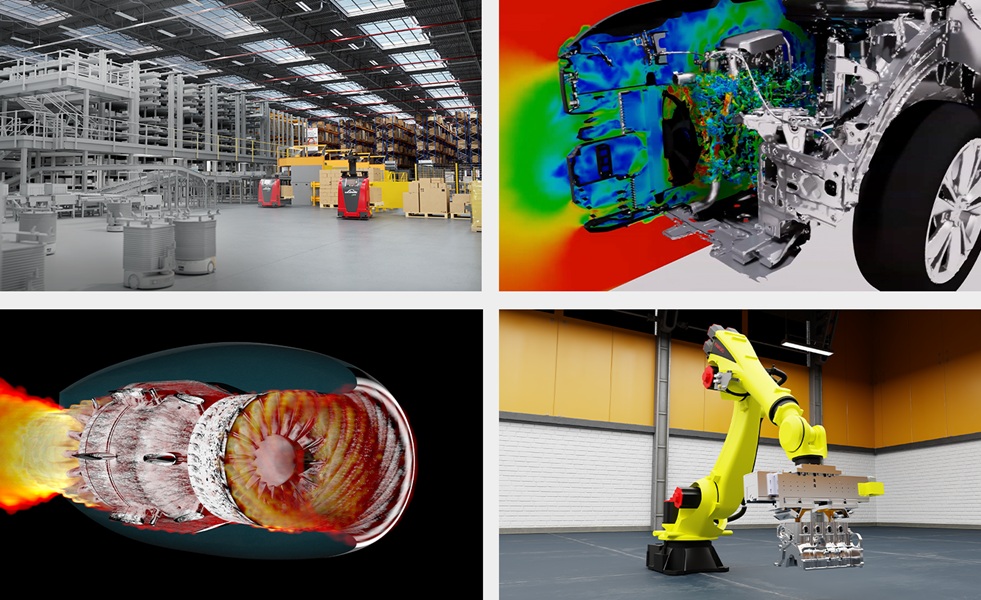

Perhaps our view is parochial and comes from a vendor’s standpoint. When digital twins first appeared, they were used for product designs and simulation, but those are notional. Infrastructure, assets, and plants, by contrast, have a specific physical lifecycle that their twin must match; in our sense of the term, the twin has its own lifecycle too. Including that reality aspect is a more ambitious undertaking.

An example from our work with Siemens in the process automation world came at their Karlsruhe facility. They had 3D models for the as-designed and as-built versions of the plant, but those were not the same. Then, when we captured the physical plant, it was not the same as either. So, a digital twin cannot just be digital, it must be a true twin. The requirement is reality, which is very challenging when you’re talking about an infrastructure asset, like a plant.

But, beyond that it must be a useful digital twin; it must have some explanatory value, rather than simply being a product simulation. It must have veracity. It needs to link digital components so that the behaviour of the asset can be predicted.

The third element, after reality and veracity, is fidelity. An infrastructure asset will change over its lifecycle, and one must be able to capture and understand that as well. That’s a tall order, and it took time to bring together some interesting technologies, but I think we’re there now.

What technologies have been developed that enable the digital twin to reach its potential?

We’ve had to bring together three of them, and they correspond to the three requirements of reality, veracity, and fidelity. First, for reality, the technology we’ve brought to bear on what we call reality modelling is ContextCapture. It enables one to create a reality mesh, which is an engineering-ready 3D model of an existing asset, from merely overlapping photographs. For plants, two years ago we introduced the capability for imagery to include laser scans along with photography, so that you can go through the plant with a drone-mounted video camera or a handheld camera and supplement the imagery with laser scans only where you have darkness or reflection.

That is all processed into a mesh to deliver the reality model that is ready for the engineering software.

Second, we use machine learning on the reality mesh to recognise and classify the digital components, which are cross-referenced into the veracity model: what we call the digital DNA of the plant, captured in engineering models. These can be 3D or 2D, but they bring together the digital components so they can be classified and cross-referenced in what we call an open, connected data environment. Digital alignment means the components are semantically related: they are described and tagged the same way.

The third aspect is fidelity. You need to synchronise the changes that occur so that the model remains a twin over its lifecycle. If it once was a twin but is not now, then it would be dangerous to assume it can be used for immersive visualisation and analytics. We use a cloud service called a distributed change ledger that allows you to move forward and backward on a timeline.
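To make the timeline idea concrete, here is a minimal sketch of an append-only change ledger whose state can be rebuilt for any point in time by replaying the recorded changes. It is an illustration only, not Bentley's service: the `Change` and `ChangeLedger` names, the integer timestamps, and the `P-101` pump tag are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Change:
    timestamp: int   # hypothetical logical time, e.g. days since project start
    component: str   # hypothetical tag of the plant component
    prop: str        # name of the property that changed
    value: Any       # new value; None means the property was removed


class ChangeLedger:
    """Append-only list of changes; state is derived by replaying it."""

    def __init__(self) -> None:
        self._changes: list[Change] = []

    def record(self, change: Change) -> None:
        # Keep the ledger ordered so replay can stop at the first later change.
        if self._changes and change.timestamp < self._changes[-1].timestamp:
            raise ValueError("changes must be recorded in time order")
        self._changes.append(change)

    def state_at(self, timestamp: int) -> dict[str, dict[str, Any]]:
        """Rebuild the model as it stood at `timestamp` (inclusive)."""
        state: dict[str, dict[str, Any]] = {}
        for change in self._changes:
            if change.timestamp > timestamp:
                break
            props = state.setdefault(change.component, {})
            if change.value is None:
                props.pop(change.prop, None)
            else:
                props[change.prop] = change.value
        return state


ledger = ChangeLedger()
ledger.record(Change(1, "P-101", "status", "as-designed"))
ledger.record(Change(5, "P-101", "status", "as-built"))
ledger.record(Change(9, "P-101", "impeller_mm", 210))

print(ledger.state_at(3))  # the model before the as-built update
print(ledger.state_at(9))  # the model after all recorded changes
```

Because nothing is overwritten, moving backward on the timeline is simply a replay that stops earlier; a production system would add snapshots so that long histories do not have to be replayed from the start.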

Those are the technologies that it has taken for us to enable what we now think can be accorded the status of a digital twin for infrastructure or planning.

What do you expect from a modern digital twin?

First, let me say that it would need to be enabled by a cloud service where you can be immersed in the environment of the plant. In this immersive, intuitive environment you would expect to be able to find the operational technology (OT), the IoT inputs of the plant. You would expect to be able to navigate intuitively and find the information technology (IT), the transaction systems, and access the records and documents. And you need to have the engineering technology inputs – so OT, IT, and engineering technology (ET).

You need to bring those together; the digital twin comprises all of those. And when you have it all together you can have immersive visualisation – this can be mixed reality (MR), augmented reality (AR), or virtual reality (VR).

You want to be able to run analytics against this confluence of OT, IT, and ET. This allows analytics to utilise digitally aligned data to perform simulations, studies, and comparisons across a plant asset and a fleet of plant assets.

What is dark data and why is it a challenge?

Every plant has been engineered and is operated with intelligent tools and, if not 3D, at least 2D models that reflect the intentions and behaviours the plant engineers used in design and construction. But even though that work was done with intelligent tools, the result, from the standpoint of visibility to the plant owner, is generally a dumb blob. It can be opened by the originating software, but from the standpoint of data analytics it’s dark.

That is a solvable problem, but it requires being able to bridge the different vendor platforms and proprietary solutions semantically. The data will be dark until you bridge them and create a digital alignment in a common platform. We call this opening up dark data. It was created intelligently, was bought and paid for, and has value. We think our role is to provide open-source toolkits so that the owners can perform analytics with this dark data.
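As a rough illustration of what bridging vendor formats semantically can involve, the sketch below maps two differently shaped records for the same pump onto one shared schema. Everything in it is assumed for the example: the vendor field names, the `FIELD_MAPS` and `CLASS_MAP` tables, and the `P-101` tag convention are hypothetical and do not come from any Bentley toolkit.

```python
# Hypothetical exports from two vendor tools describing the same pump.
vendor_a = [{"TagNo": "P-101", "Type": "CentrifugalPump", "Flow_m3h": 120}]
vendor_b = [{"equipment_id": "p101", "class": "PUMP-CENTRIF", "flow": 120.0}]

# Hypothetical mapping from each vendor's field names to a shared schema.
FIELD_MAPS = {
    "vendor_a": {"TagNo": "tag", "Type": "component_class", "Flow_m3h": "flow_m3h"},
    "vendor_b": {"equipment_id": "tag", "class": "component_class", "flow": "flow_m3h"},
}

# Hypothetical mapping from vendor class names to one shared classification.
CLASS_MAP = {
    "CentrifugalPump": "pump.centrifugal",
    "PUMP-CENTRIF": "pump.centrifugal",
}


def normalise_tag(raw: str) -> str:
    """Rewrite 'p101' or 'P-101' as 'P-101' (an assumed tag convention)."""
    compact = raw.replace("-", "").upper()
    letters = compact.rstrip("0123456789")
    return f"{letters}-{compact[len(letters):]}"


def align(record: dict, source: str) -> dict:
    """Rename fields, then normalise values so records become comparable."""
    aligned = {FIELD_MAPS[source][key]: value for key, value in record.items()}
    aligned["tag"] = normalise_tag(aligned["tag"])
    aligned["component_class"] = CLASS_MAP[aligned["component_class"]]
    aligned["flow_m3h"] = float(aligned["flow_m3h"])
    return aligned


record_a = align(vendor_a[0], "vendor_a")
record_b = align(vendor_b[0], "vendor_b")
print(record_a == record_b)  # both sources now describe the pump identically
```

Once the records are described and tagged the same way, ordinary analytics tooling can join and compare them, which is the sense in which the data stops being dark.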

What about the process of managing change? How is this accomplished?

That is through the connected data environment. When something changes, it is checked in and out of the IT environment. At that time, we can create what we call a change ledger and then make that available for synchronisation. You do not need to be informed of every change, so you have to be able to batch those up through a hub and make them available to be synchronised when required.
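The batching behaviour described here can be sketched as a hub that logs every check-in and lets each consumer pull only the changes it has not yet seen. This is a simplified illustration under assumed names (`ChangeHub`, `check_in`, `pull`), not a description of the actual service.

```python
class ChangeHub:
    """Collects checked-in changes and hands them out in batches on request."""

    def __init__(self) -> None:
        self._log: list[str] = []          # every change, in check-in order
        self._cursor: dict[str, int] = {}  # subscriber -> index of next unseen change

    def check_in(self, change: str) -> None:
        # Changes accumulate silently; nobody is notified per change.
        self._log.append(change)

    def pull(self, subscriber: str) -> list[str]:
        """Return everything checked in since this subscriber last synchronised."""
        start = self._cursor.get(subscriber, 0)
        batch = self._log[start:]
        self._cursor[subscriber] = len(self._log)
        return batch


hub = ChangeHub()
hub.check_in("valve V-12 replaced")
hub.check_in("pipe run re-routed")

first_batch = hub.pull("maintenance-app")   # both changes arrive together
second_batch = hub.pull("maintenance-app")  # nothing new since the last pull
hub.check_in("pump P-101 re-rated")
third_batch = hub.pull("maintenance-app")   # only the latest change
late_joiner = hub.pull("analytics-app")     # a new subscriber gets the full history
```

Each subscriber keeps its own position in the log, so one consumer can synchronise hourly and another monthly without either missing a change.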

The dynamic aspect is key for the lifecycle of a digital twin. What we want to move toward is a time-machine slider that can show what was done in the past: what did the plant look like during construction? And you could slide it into the future to ask: if we don’t maintain it, what will the failure modes be? Not all of that is realisable, but that’s the notion we want to move toward.

What are the advantages of a fully functional digital twin?

Imagine the things you could do with immersive visualisation: you could intuitively find all the information in the plant, whether it be IT, OT, or ET, and you could bring your analytics to bear because it is not dark data anymore. The way I conceptualise it is that, over the lifecycle of a plant, these automated digital workflows now become possible.

What do you mean by resilience twin?

When we think of infrastructure, we need to consider the risks – events such as power outages, floods, and seismic activity. An example is the construction of a digital twin for the Shell Pennsylvania Petrochemicals Complex, a major petrochemicals plant that will process ethane from shale gas. It is a large construction project where they fly drones every day during construction. They have 6,000 people working on the site. For the resilience aspect of that digital twin, they are now taking advantage of our flood modelling software because the site sits where two rivers meet. Over the construction period they are very likely to have flooding, and they need to be able to simulate this at any stage.

In other instances, it might be seismic resilience that you would need during construction. But even when the plant is operating you need the same resilience to weather conditions.

Why is it important to have open-source software?

In our observation of cloud services architectures, generally we would say open wins. You can see that in other domains. When it comes to engineering, any operator that is big enough to operate a process plant will have skilled people who are ready to be targeted at important problems. We think open-source environments, which the people who work on artificial intelligence or machine learning are comfortable with, are the best solution. We do not know what the most valuable and highest-priority applications will be from one owner to the next, so we do not want to get in the way of their prioritisation. Having an open-source toolkit is our way of saying that our priority is to open up the data and then they can take it from there.

How important is asset plant management?

For every operating plant, the owner has invested in the work of engineers, who worked to standards and specifications. However, owners are not getting the benefit that could be provided by being able to use that ET along with the OT and the IT in a digital twin. There is a lot of plant instrumentation that is going to generate a lot of data insights. With PlantSight we are going to put that together with the digital DNA.

PlantSight enables as-operated, up-to-date digital twins that synchronise with both physical reality and engineering data, creating a holistic digital context in which digital components are understood consistently across disparate data sources, for any operating plant. Plant operators benefit from trustworthy, high-quality information for continuous operational readiness and greater reliability.

Where next for digital twins?

Cloud services get better and richer all the time. What is great with digital twins is that we are taking advantage of advancements that are occurring in computing environments and computing technologies in general. When we talk about immersive visualisation today we think of that as 3D on screens, but Microsoft has just released its HoloLens 2 to move this forward. We were part of the release, and the industrial applications they were showing were for our construction 4D modelling. The HoloLens 2 is not for gaming; it’s for industrial applications, including process plants. We do not have to invent these technologies; they are being driven by advances on the consumer side.

CV: Greg Bentley, CEO, Bentley Systems

Greg Bentley is the CEO of Bentley Systems, the global leader dedicated to providing engineers, architects, constructors, geospatial professionals and owner-operators with comprehensive software solutions and cloud services for advancing infrastructure. Mr. Bentley holds an M.B.A. in Finance and Decision Sciences from Wharton. He is a trustee of Drexel University, where he also serves as Chairman of the Advisory Board for the Pennoni Honors College. In 2013, Bentley was elected to the National Academy of Construction, and in 2018 he was named a Fellow of the Institution of Civil Engineers in the United Kingdom.